Life is strange and overwhelming at times.

So, my personal project work has been done behind curtains for the past year or so. Some reasons be personal, depressing, difficult to explain, hick-ups and/or related to a separation and I was starting to feel Anhedonic.

But, I have not been idle at all. I just took a detour that cost me months of work in my spare time to get working correctly. While not being able to work fast and be very productive, I still kept working on my project. Things that should have taken me a few days, took me weeks to see through. Lots of nights, thinking, loosing track of my own thoughts, thinking some more.

Needed to debug some things, basically find and fix all memory leaks in my library, but the debug performance of my library was so horrid that it was impractical to run my tests under such verbose configurations. So, I started by fixing most of the leaks with a lot simpler tests, but then found an old list of mine that had all the “tech debt” to fix when I have a nice few weeks of quiet. So, why not now?

I redid all my exact predicates to be as efficient as I could muster by using LLVM IR, hand optimized meta assembly with macros so that I could have the LLVM backend spit out all the assembly and binary for building the efficient routines for any platform LLVM supports. The lack of most programming languages is that they don’t support large integer math in a good way and usually it is clunky and generic. This is the first real example I have run into where it is actually easy to beat the compiler, because no compiler for any programming language supports this. And before you start mentioning RUST, you should take a look at the LLVM sources and features RUST relies on, and that it is using a very naieve n^2 expansions for integers where it requires you to sign/zero extend your small numbers to match the width of the ouput. Doing too much work for small operations. Anything bigger than 192 bits is doing too much redundant work.

I started by doing a lot of fiddling in assemlber. NASM, mostly. Wrote my intial big int math in assembler by hand, read Agners optimization manuals, but forced myself to step out of that rabbit hole and focus on using LLVM IR instead.

The results were quite nice.

A random box test that I have been using as one of my few dozen basic sanity tests ran in 70 seconds before I started all of this. And it was running at 240 secs the first time it was passing when this was all implemented in C#.

My large 4×4 determinant function that was previously using an open source library called ttmath, was replaced successfully and runs about ~26 times faster.

I encoded my calculation masks in one of the plane components to reduce the size of each plane to 32 bytes. Altered my hashing to combine hashes from the mask, C and D component to reduce most of the memory overhead of caching the hashes. Added some basic bounds tests for the polygons as well to help with simplifications.

At this point the same test was running in 21 secs.

The next thing I did, was to remove an old hack I created that used the BSP trees to clip of the polygons one by one, instead of interleaving the construction with the merging of the trees.

Now my cubes wrap up in 5.5 secs.

I remove all recursive functions and make them all iterative, improved the allocations and make the threading a bit saner.

My boxes are closing at 3.9 secs.

I augment the tree with all the polygon data so that I don’t have to do as many surface extraction from the tree to rebuild.

The test is now at 2.7 seconds.

I encode the constant planes I use for balance partitioning and world borders in 32 bits and it actually brings my test down the 2.3 secs. Via help from alignment and copying items to the stack.

* Now, I am implementing “Bruce Naylors, Good Tree Heuristics”. To have a log n depth to the tree AND an early out for quicker testing.

* Then I have to cache my large int sub determinants, to reduce the actual orientation computations to 4 multiplications and 3 additions. (A fun task).

But this will also have a few more cached things as well, such as the bounds of the augmented tree polygons, because simplifying the polys while merging the trees does help a lot with the efficiency of the following operations.

Caching the data will add a bit of an overhead to the memory since the subdeterminants for each linear combination for each point, could be at most 88 bytes and at least 24 bytes.

The list of tasks and things I changed is a lot richer than these few items above, but I have been using Superluminal to help with profiling the app and it has been very helpful to say the least.

So, what have you been doing for the past year that you don’t have anything to show for?

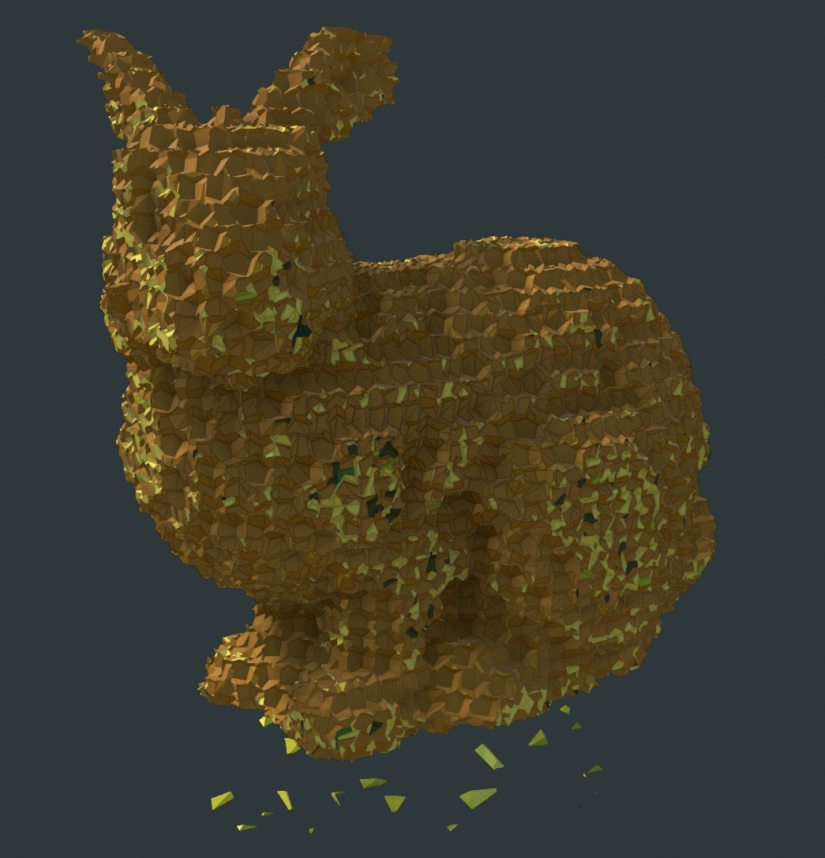

This old test of mine runs in 12 mins on my old mac book pro now. 10366 dodecahedrons subtracted from a simple cube. (900 ms now actually)